To make good use of LLMs, it’s important to equip them with augmentations such as retrieval, tools, and memory.

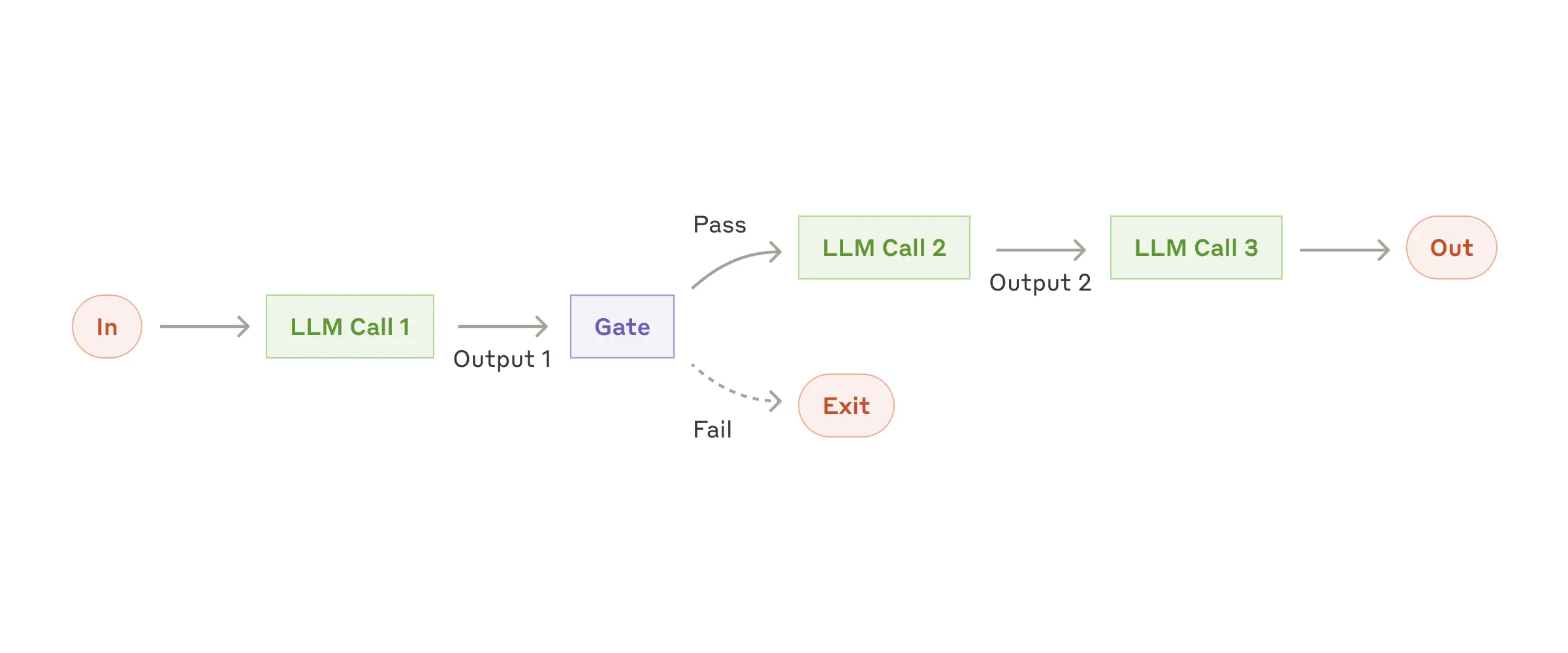

Prompt Chaining

Prompt chaining decomposes a task into a sequence of steps, where each LLM call processes the output of the previous one. You can add programmatic checks (see “gate” in the diagram below) on any intermediate step to ensure that the process is still on track.

Ideal for situations where the task can be easily and cleanly decomposed into fixed subtasks.

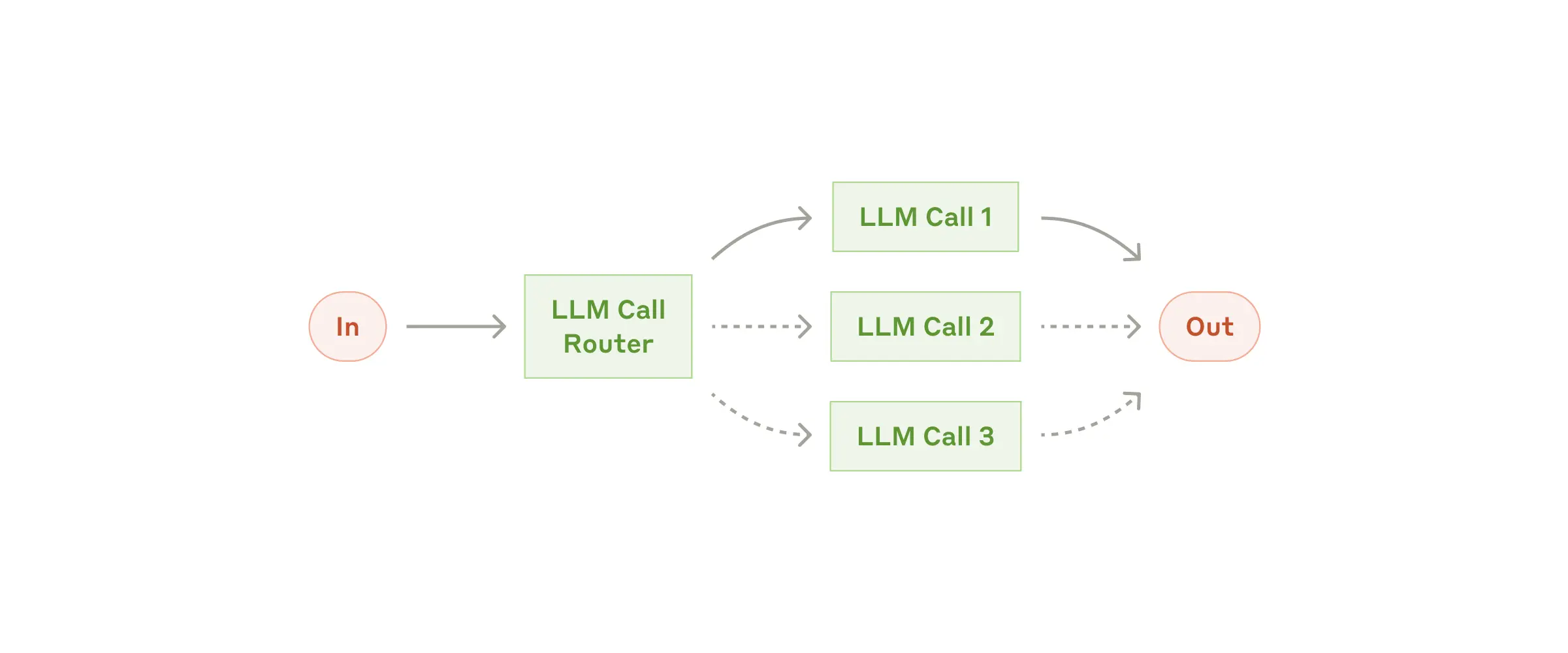
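
A minimal sketch of a two-step chain with a gate between the steps. The names `fake_llm`, `outline_gate`, and `run_chain` are hypothetical, and `fake_llm` is a canned stand-in for a real model call, so treat this as an illustration of the control flow rather than a reference implementation.

```python
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; returns canned text."""
    if prompt.startswith("Outline:"):
        return "1. Intro\n2. Body\n3. Conclusion"
    return "Draft based on -> " + prompt


def outline_gate(outline: str) -> bool:
    """Programmatic check (the gate): require at least three numbered points."""
    numbered = [ln for ln in outline.splitlines() if ln[:1].isdigit()]
    return len(numbered) >= 3


def run_chain(topic: str, llm: Callable[[str], str] = fake_llm) -> str:
    # Step 1: the first call produces an outline.
    outline = llm(f"Outline: {topic}")
    # Gate: stop early if the intermediate output is off track.
    if not outline_gate(outline):
        raise ValueError("outline failed the gate; stopping the chain")
    # Step 2: the second call consumes the output of the first.
    return llm(f"Write: expand this outline into a document:\n{outline}")
```

Passing the model call in as a parameter keeps the chain testable: a real client can be swapped in for `fake_llm` without touching the gate logic.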

Routing

Routing classifies an input and directs it to a specialized follow-up task. Without this workflow, optimizing for one kind of input can hurt performance on other inputs.

Ideal for complex tasks where there are distinct categories that are better handled separately, and where classification can be handled accurately, either by an LLM or a more traditional classification model/algorithm.

Examples where routing is useful:

- Directing different types of customer service queries (general questions, refund requests, technical support) into different downstream processes.

- Routing easy/common questions to smaller models like Claude 3.5 Haiku and hard/unusual questions to more capable models like Claude 3.5 Sonnet to optimize cost and speed.

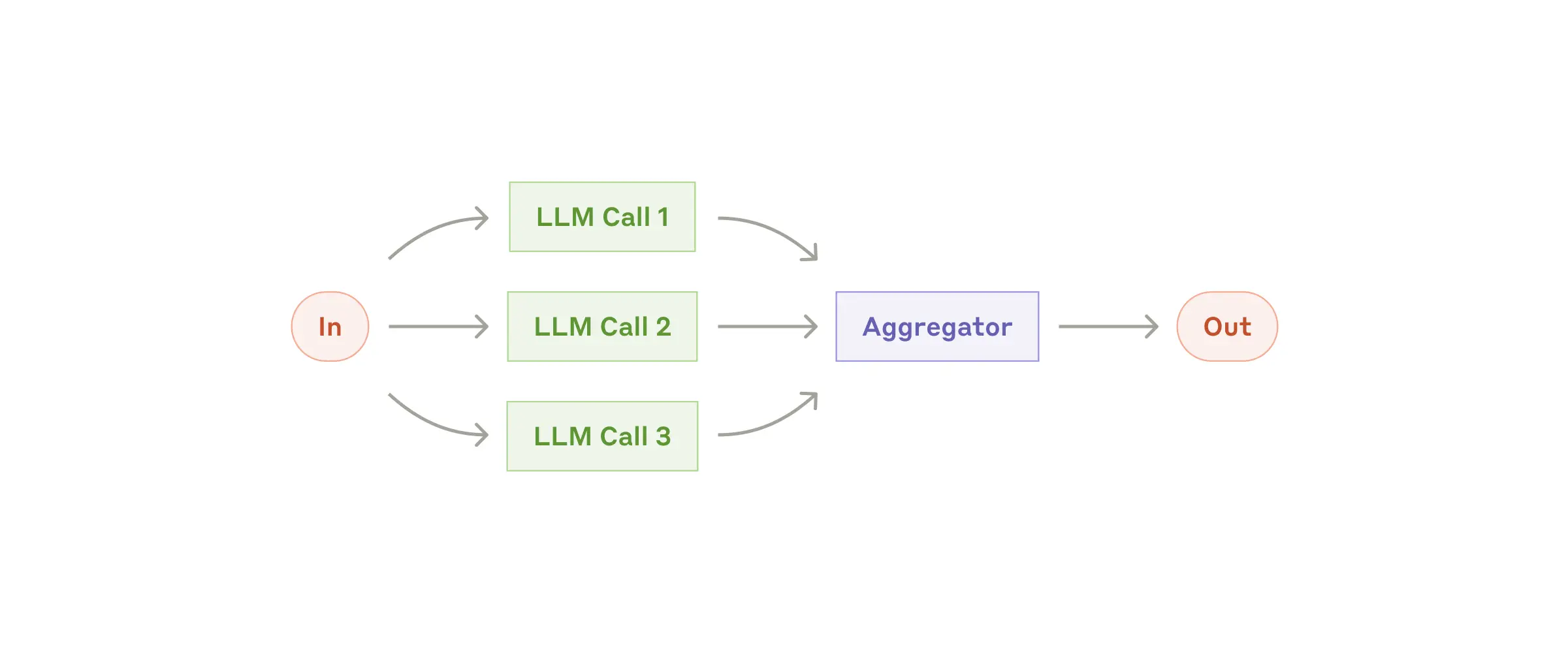
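
The customer service example above can be sketched as a classifier plus a dispatch table. Everything here is an assumption made for illustration: `classify` is a keyword matcher standing in for an LLM or a traditional classifier, and the handler names and categories are hypothetical.

```python
from typing import Callable, Dict


def classify(query: str) -> str:
    """Hypothetical keyword classifier standing in for an LLM or ML model."""
    text = query.lower()
    if "refund" in text:
        return "refund"
    if "error" in text or "crash" in text:
        return "technical"
    return "general"


def handle_refund(query: str) -> str:
    return f"[refund flow] {query}"


def handle_technical(query: str) -> str:
    return f"[technical support flow] {query}"


def handle_general(query: str) -> str:
    return f"[general answer flow] {query}"


# Each category gets its own specialized downstream process.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "refund": handle_refund,
    "technical": handle_technical,
    "general": handle_general,
}


def route(query: str) -> str:
    """Classify the input, then dispatch to the matching handler."""
    return HANDLERS[classify(query)](query)
```

The same shape covers the model-selection example: the handlers would differ only in which model they call.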

Parallelization

LLMs can sometimes work simultaneously on a task and have their outputs aggregated programmatically. This workflow takes two main forms:

- Sectioning: Breaking a task into independent subtasks run in parallel.

- Voting: Running the same task multiple times to get diverse outputs.

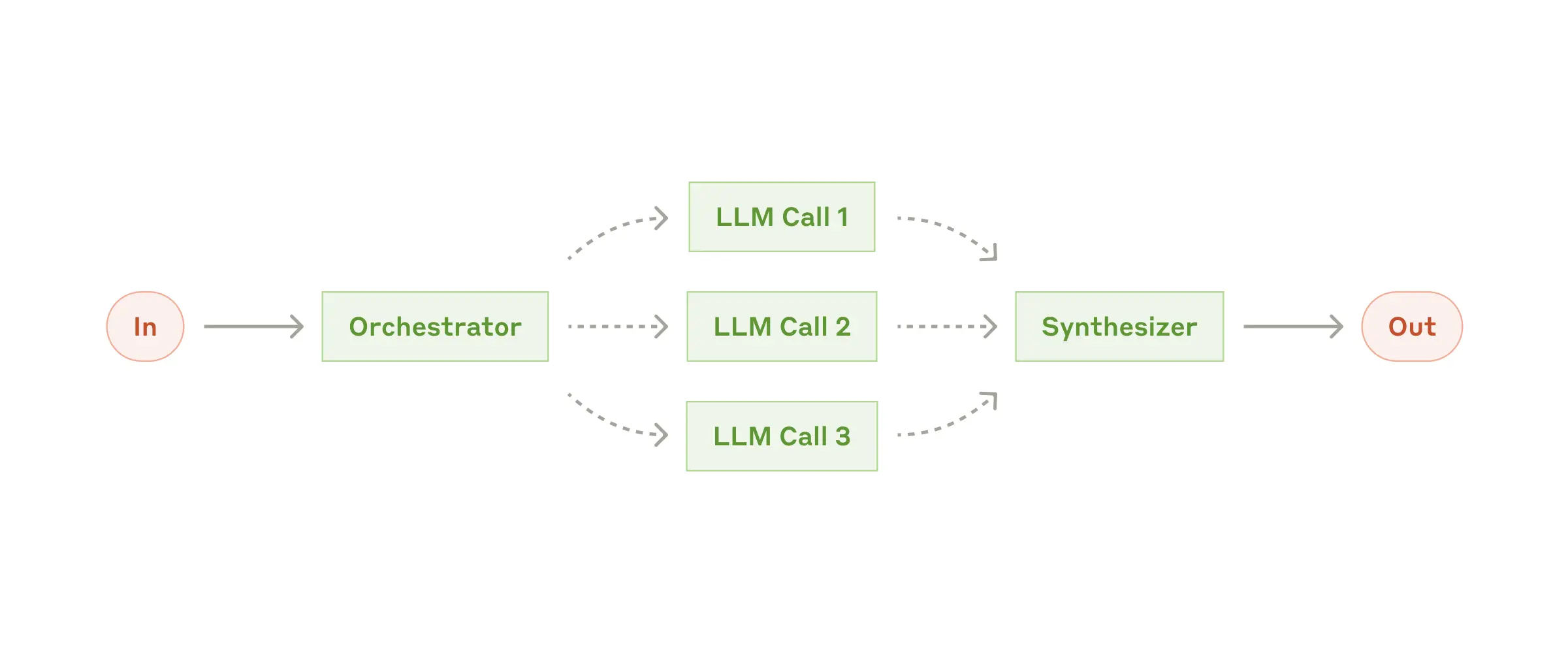
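
Both variations can be sketched with a thread pool from the standard library. `fake_llm` is a hypothetical, deterministic stand-in for a model call, and aggregating votes by simple majority is an assumption of this sketch, not something the text prescribes.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call; deterministic for illustration."""
    if prompt.startswith("Is this code safe?"):
        return "unsafe" if "eval(" in prompt else "safe"
    return f"summary of <{prompt}>"


def sectioning(subtasks: List[str], llm: Callable[[str], str] = fake_llm) -> List[str]:
    """Run independent subtasks in parallel; results keep the input order."""
    with ThreadPoolExecutor() as pool:
        return list(pool.map(llm, subtasks))


def voting(prompt: str, n: int = 3, llm: Callable[[str], str] = fake_llm) -> str:
    """Run the same prompt n times in parallel and return the majority answer."""
    with ThreadPoolExecutor() as pool:
        votes = list(pool.map(llm, [prompt] * n))
    return Counter(votes).most_common(1)[0][0]
```

With a real model the `n` votes would usually differ from run to run, which is what makes the aggregation step useful; the stub here always agrees with itself.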

Orchestrator-workers

A central LLM dynamically breaks down tasks, delegates them to worker LLMs, and synthesizes their results.

Ideal for complex tasks where you can’t predict the subtasks needed, because they are determined by the specific input.

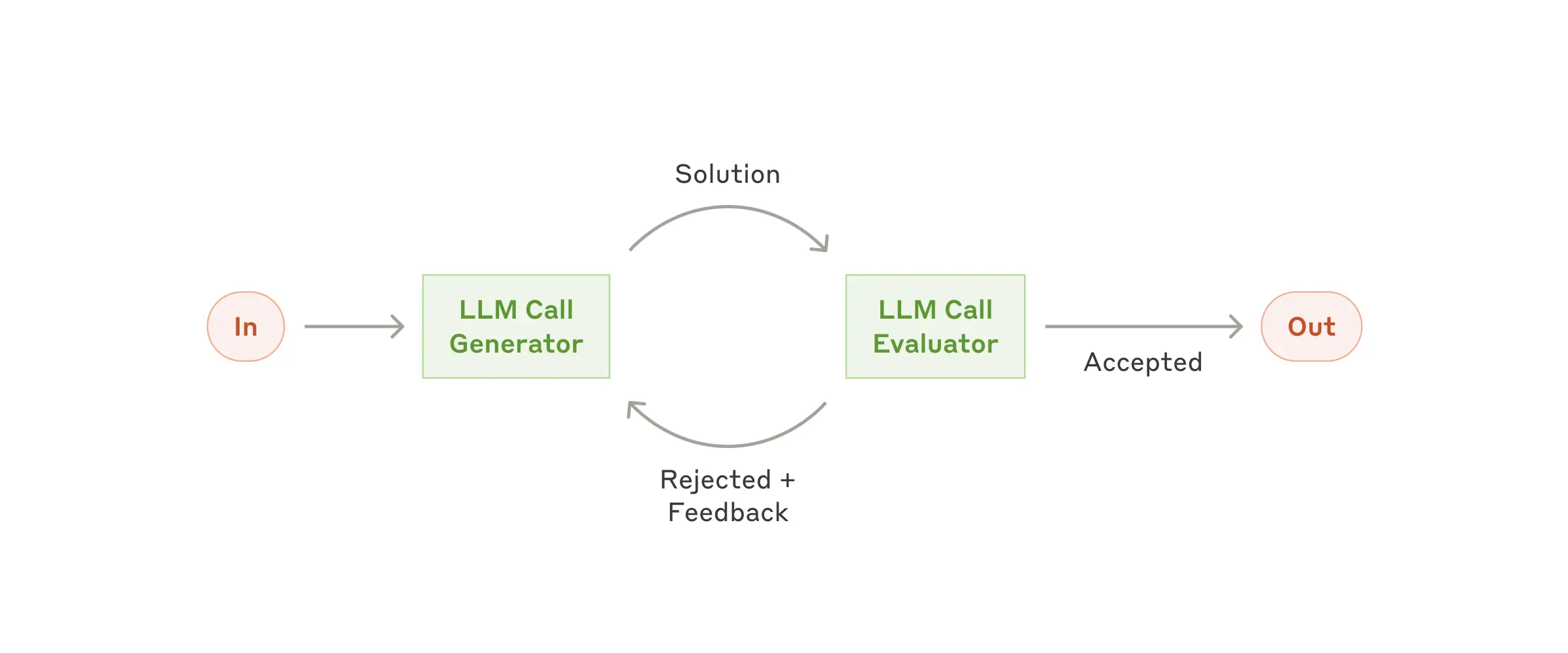
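
A minimal sketch of the orchestrator-workers flow. In a real system the planner, the workers, and the synthesis step would each be LLM calls; here `orchestrator_plan`, `worker`, and `synthesize` are hypothetical plain functions, and the "one subtask per mentioned file" rule is an assumption chosen only to show that the subtasks depend on the input.

```python
from typing import List


def orchestrator_plan(task: str) -> List[str]:
    """Hypothetical planner: derives subtasks from the input at run time.

    Here it creates one subtask per file name mentioned in the task.
    """
    return [f"update {word}" for word in task.split() if word.endswith(".py")]


def worker(subtask: str) -> str:
    """Hypothetical worker; a real one would call an LLM on the subtask."""
    return f"done: {subtask}"


def synthesize(task: str, results: List[str]) -> str:
    """Combine the worker outputs into a single response."""
    return f"{task} -> " + "; ".join(results)


def run(task: str) -> str:
    subtasks = orchestrator_plan(task)           # number of subtasks is not fixed
    results = [worker(s) for s in subtasks]      # delegate each subtask
    return synthesize(task, results)             # merge the results
```

The structural difference from sectioning is that the list of subtasks is produced by the planner for each input instead of being fixed in advance.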

Evaluator-optimizer

In a loop, one LLM call generates a response while another evaluates it and provides feedback.

Ideal when we have clear evaluation criteria, and when iterative refinement provides measurable value.
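
The loop can be sketched as follows. `generate` and `evaluate` are hypothetical stand-ins for the two LLM calls, the "must mention sources" criterion is an invented example of a clear evaluation rule, and the `max_rounds` cap is an assumption added so the loop always terminates.

```python
from typing import Optional, Tuple


def generate(task: str, feedback: Optional[str]) -> str:
    """Hypothetical generator; a real one would prompt an LLM with the feedback."""
    draft = f"Answer to {task}"
    if feedback:
        draft += " (with sources)"
    return draft


def evaluate(draft: str) -> Tuple[bool, str]:
    """Hypothetical evaluator: accept only drafts that mention sources."""
    if "sources" in draft:
        return True, ""
    return False, "add sources"


def refine(task: str, max_rounds: int = 3) -> Tuple[str, int]:
    """Alternate generation and evaluation until accepted or out of rounds."""
    feedback: Optional[str] = None
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = generate(task, feedback)
        accepted, feedback = evaluate(draft)
        if accepted:
            return draft, round_no
    # Not accepted within the budget: return the last draft anyway.
    return draft, max_rounds
```

Returning the round count alongside the draft makes it easy to measure whether extra iterations are actually improving the result.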